Convert CSV and JSON to optimized analytics-ready Parquet.

Fast. Simple. Scalable.

A purpose-built, lightweight converter that runs inside your cloud or data environment, with no ETL pipelines required.

We offer private AMI deals today via direct contract, with AWS Marketplace private offers available once our listing is approved.

Are your datasets getting expensive to store and query?

Storage costs keep growing.

Query costs increase with scanned bytes.

Transfers and reads take longer.

Are CSV and JSON slowing down analytics?

Queries take longer as data grows.

Text formats force engines to read and parse more data.

Costs rise with scanned bytes.

In query engines that support it, Parquet enables predicate pushdown and column pruning, which can significantly reduce scanned data and improve query performance.
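To make the two terms concrete, here is a toy model in plain Python. It is not the Parquet format itself and not Parqify's code. It assumes data is stored as per-column chunks that each carry min/max statistics, which is the idea Parquet row groups build on. The names `ColumnChunk`, `make_row_groups`, and `scan` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ColumnChunk:
    """One column's values for one row group, plus min/max statistics."""
    values: list

    @property
    def min_value(self):
        return min(self.values)

    @property
    def max_value(self):
        return max(self.values)


def make_row_groups(rows, columns, group_size):
    """Split rows into row groups, storing each column separately."""
    groups = []
    for start in range(0, len(rows), group_size):
        block = rows[start:start + group_size]
        groups.append({c: ColumnChunk([r[c] for r in block]) for c in columns})
    return groups


def scan(groups, select, filter_col, threshold):
    """Return `select` values where filter_col >= threshold, plus values read."""
    values_read = 0
    out = []
    for group in groups:
        # Predicate pushdown: skip the whole group using its statistics.
        if group[filter_col].max_value < threshold:
            continue
        # Column pruning: touch only the two columns the query needs.
        flt = group[filter_col].values
        sel = group[select].values
        values_read += len(flt) + len(sel)
        out.extend(s for f, s in zip(flt, sel) if f >= threshold)
    return out, values_read


rows = [{"id": i, "amount": i * 10, "note": "x" * 50} for i in range(100)]
groups = make_row_groups(rows, ["id", "amount", "note"], group_size=25)
result, values_read = scan(groups, select="id", filter_col="amount", threshold=900)
```

With this toy data, the query reads 50 stored values instead of all 300: three of the four row groups are skipped by their statistics, and the `note` column is never touched. A row-oriented text file would typically have to be read and parsed in full to answer the same query.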

Is schema handling slowing you down?

CSV and JSON structures can drift over time.

Manual schema maintenance is brittle.

Mismatched fields can break downstream jobs.
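As an illustration of what drift looks like, the sketch below compares the field names and value types of two JSON records. It is a generic check in plain Python, not a description of how Parqify handles schemas; `infer_schema` and `diff_schemas` are hypothetical helpers.

```python
import json


def infer_schema(record):
    """Map each top-level field to the name of its Python type."""
    return {key: type(value).__name__ for key, value in record.items()}


def diff_schemas(baseline, current):
    """Report fields that were added, removed, or changed type."""
    shared = set(baseline) & set(current)
    return {
        "added": sorted(set(current) - set(baseline)),
        "removed": sorted(set(baseline) - set(current)),
        "changed": sorted(k for k in shared if baseline[k] != current[k]),
    }


# An older record and a newer one from the same (made-up) feed.
old = json.loads('{"id": 1, "price": 9.5, "sku": "A1"}')
new = json.loads('{"id": "1", "price": 9.5, "region": "eu"}')

drift = diff_schemas(infer_schema(old), infer_schema(new))
```

Here `id` silently changed from a number to a string, `sku` disappeared, and `region` appeared. Any of these can break a downstream job that expects the original layout.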

Are ETL tools overkill for simple format conversion?

Complex pipelines for a straightforward task.

Operational overhead you may not need.

Longer setup and maintenance cycles.

Why Parquet Format?

Parquet is a columnar storage file format that offers several advantages over traditional row-based formats like CSV and JSON, especially for big data processing and analytics:

- Efficient Data Compression

- Faster Query Performance

- Schema Evolution

- Optimized for Big Data Frameworks

- Reduced I/O Operations

These benefits make Parquet an ideal choice for data warehousing, analytics, and machine learning workloads, where performance and storage efficiency are critical.

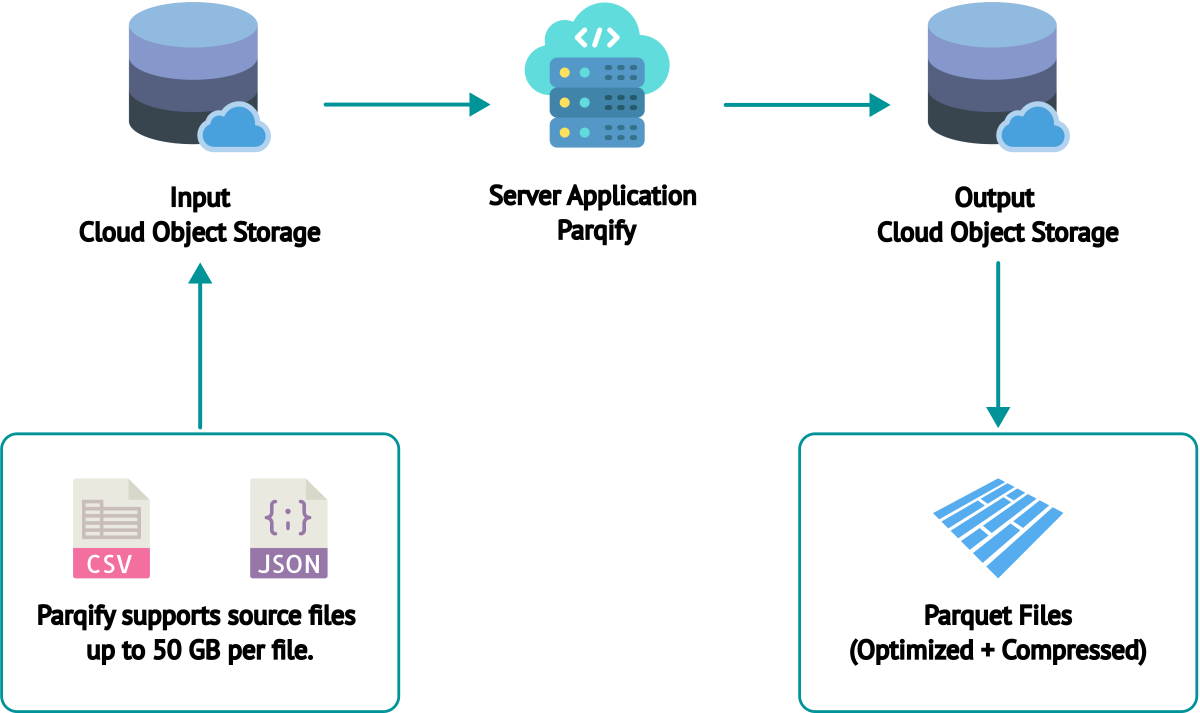

How Parqify Works

Parqify provides a server application, packaged as an AMI, that customers can deploy within their cloud environment.

This server reads CSV and JSON files from a specified cloud object storage location, converts them to Parquet, and writes the converted files to a destination storage location.

Parqify supports Amazon S3.

🚀 Optimized for Conversion — Not General ETL

Parqify uses a lightweight streaming pipeline designed specifically for cloud object storage → Parquet conversion.

Unlike Spark-based tools, it avoids cluster startup, staging datasets, and JVM overhead. Files are streamed directly from cloud object storage into Parquet writers with column-aware buffering and parallel I/O.

The result:

- 🚀 Faster startup

- 🚀 Lower memory usage

- 🚀 Fewer storage operations

- 🚀 Smaller Parquet output

- 🚀 Better performance for cloud analytics engines and data warehouses

Perfect for anyone who just needs Parquet — without building ETL infrastructure.
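The streaming idea described above can be sketched in a few lines of plain Python. This is an assumption about the general technique, not Parqify's implementation: rows are read from a text stream one at a time, buffered per column, and handed off in fixed-size batches, so memory use is bounded by the batch size and not by the file size. The function name `stream_to_column_batches` is hypothetical, and each yielded batch stands in for what a real Parquet writer would receive.

```python
import csv
import io


def stream_to_column_batches(text_stream, batch_rows):
    """Yield dicts of column name -> list of values, one per batch of rows."""
    reader = csv.DictReader(text_stream)
    fieldnames = reader.fieldnames or []  # empty input has no header
    buffers = {name: [] for name in fieldnames}
    buffered = 0
    for row in reader:
        # Append each value to its own column buffer, not to a row list.
        for name in fieldnames:
            buffers[name].append(row[name])
        buffered += 1
        if buffered == batch_rows:
            yield buffers
            buffers = {name: [] for name in fieldnames}
            buffered = 0
    if buffered:
        yield buffers  # final, partially filled batch


# An in-memory stream stands in for an object read from cloud storage.
source = io.StringIO("id,city\n1,Oslo\n2,Lima\n3,Pune\n")
batches = list(stream_to_column_batches(source, batch_rows=2))
```

Values stay as strings here; a real converter would also infer or apply column types before writing.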

Features

Parallel Processing

Process large datasets efficiently by converting multiple files concurrently.
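A minimal sketch of the concurrency pattern, using only Python's standard library. It assumes each file can be converted independently; `convert_file` is a hypothetical placeholder that only derives an output name, where a real converter would read, convert, and write the file.

```python
from concurrent.futures import ThreadPoolExecutor


def convert_file(key):
    """Placeholder for converting one file; returns the output key."""
    stem = key.rsplit(".", 1)[0]
    return stem + ".parquet"


def convert_all(keys, max_workers=4):
    """Convert several files concurrently, keeping results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(convert_file, keys))


outputs = convert_all(["raw/a.csv", "raw/b.json", "raw/c.csv"])
```

Threads suit this kind of work when most of the time is spent waiting on storage reads and writes; the worker count would be tuned to the instance size.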

Runs in Your Cloud Environment

All processing happens inside your cloud infrastructure.

No data leaves your infrastructure.

No data is sent to third-party services.

Friendly Web UI

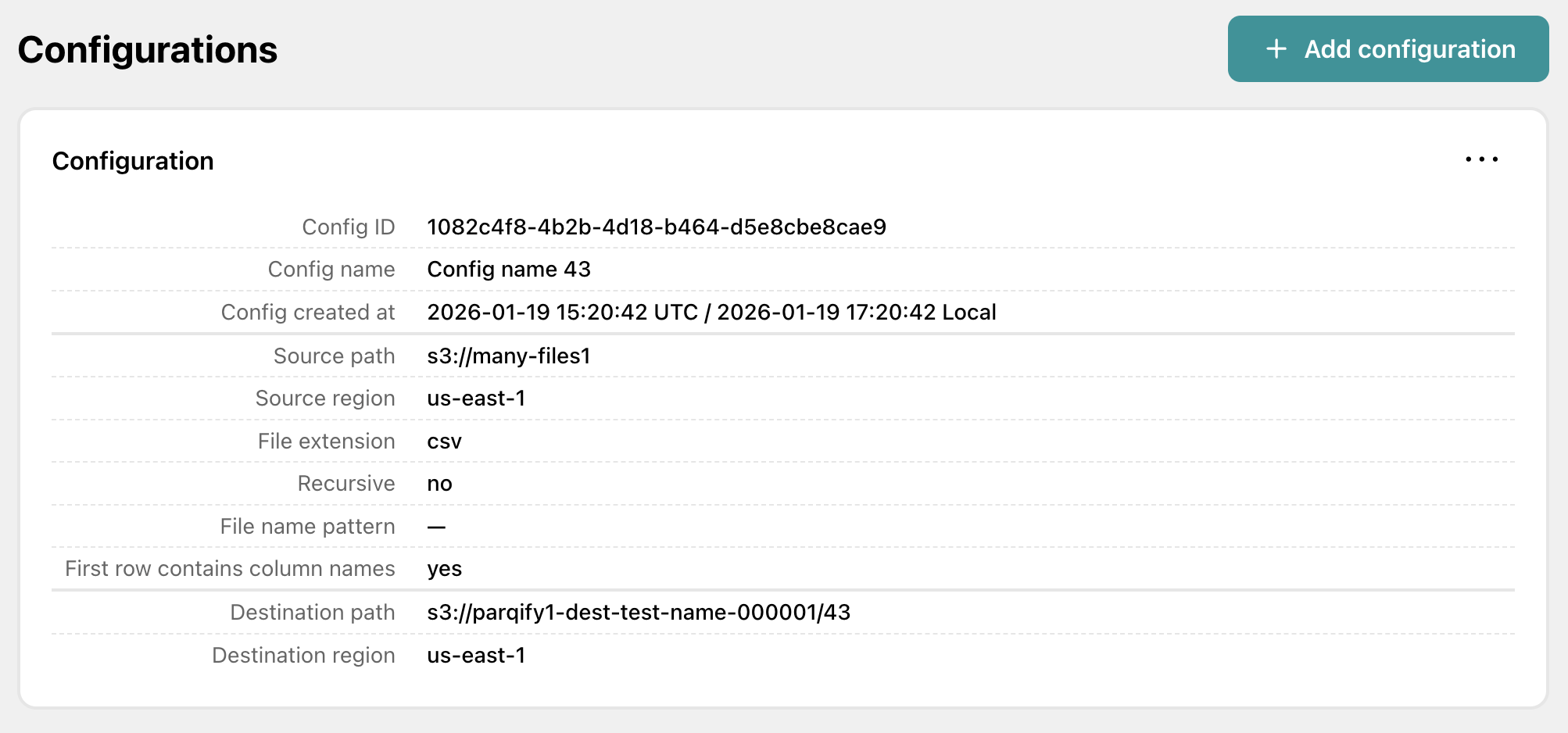

Create, edit, import, and export conversion configs.

Configure source and destination storage locations, file names, prefixes, and other conversion parameters through a simple web interface.
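To give a feel for what such a config might contain, here is a purely illustrative sketch. The actual Parqify config format is not described on this page, so every field name below is an assumption, as are the `ConversionConfig` class and its `dest_key` method. The example shows a config round-tripping through JSON (export and import) and a source key being mapped to a destination key.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ConversionConfig:
    # All field names are assumptions made for illustration only.
    source_bucket: str
    source_prefix: str
    dest_bucket: str
    dest_prefix: str

    def dest_key(self, source_key):
        """Map a source object key to its destination Parquet key."""
        relative = source_key[len(self.source_prefix):]
        stem = relative.rsplit(".", 1)[0]
        return self.dest_prefix + stem + ".parquet"


config = ConversionConfig("raw-data", "events/", "analytics", "parquet/events/")

exported = json.dumps(asdict(config))                 # export to JSON text
restored = ConversionConfig(**json.loads(exported))   # import it back

key = restored.dest_key("events/2024/01/log.json")
```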

Scalability

Scale the cloud instance size based on your dataset size and performance requirements.

Easy Deployment

Deploy Parqify through your cloud provider's marketplace.

Ready to get started?

Launch on AWS Marketplace

Quick Start

Get started in minutes:

- ✔️ Launch Parqify from AWS Marketplace

- ✔️ Open your browser to the instance's IP address

- ✔️ Create your first conversion